Process Health


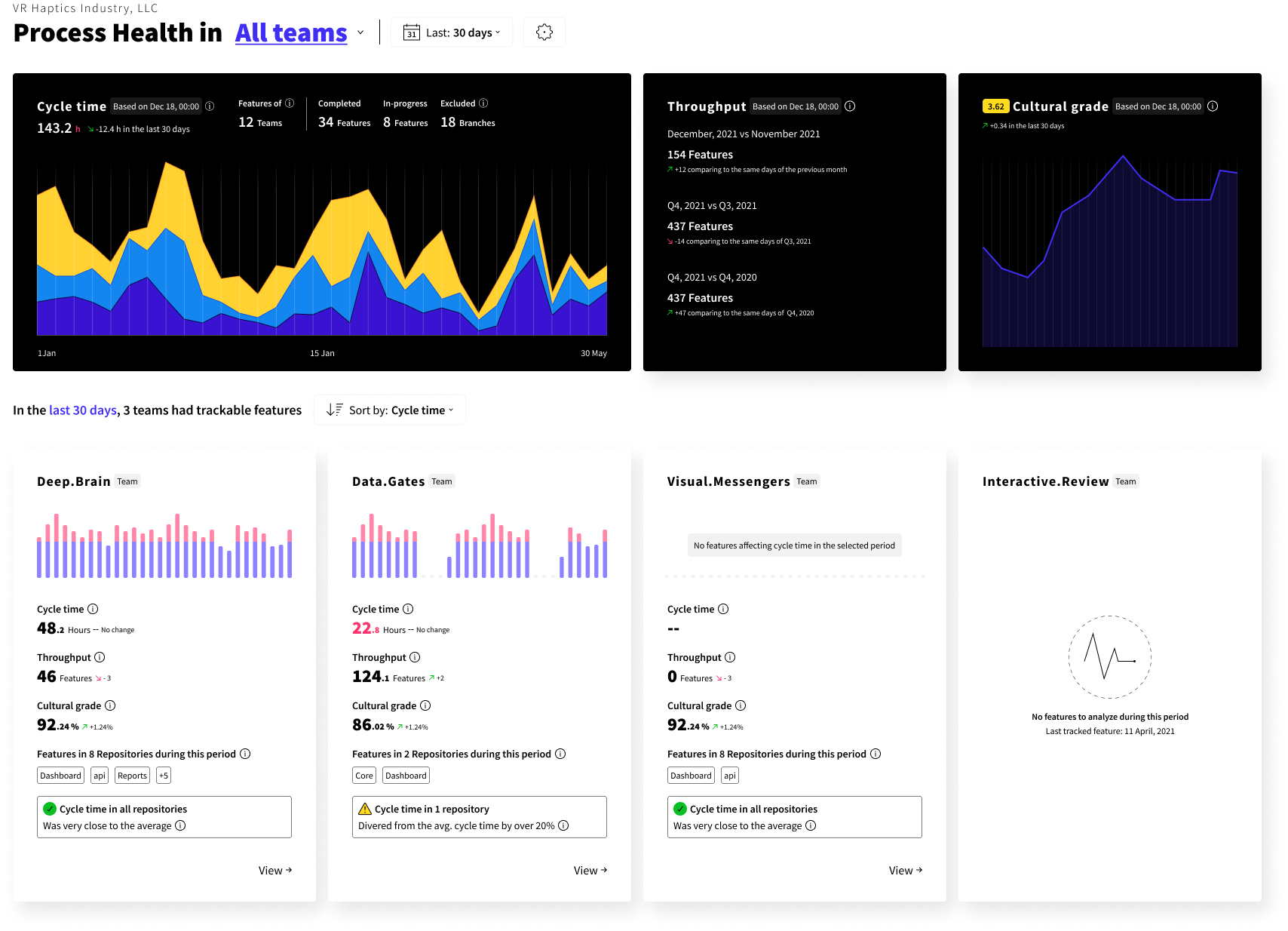


Delivery flow is linked to the state of your codebase, the processes your team uses, and how your teams are structured.

Without analytics, it can be hard to identify the high-value, systemic issues that affect your teams' delivery flow the most.

Process health can help you understand:

How fast is your team, tribe, or entire organization?

Is our speed improving over recent weeks and months?

What slows the delivery flow down - processes or the state of the codebase?

Which process or codebase area would benefit the team most if we investigated and improved it?

What impact have our recent refactoring work, process changes, or team structure changes had on our delivery flow?

Cycle time measures how long it takes to deliver a change to the market. It is the average duration of all feature branches that were active during the selected period.

Duration is tracked from the branch creation event (or first commit) to the merge into a target branch (usually develop or main, configurable in the branching strategy).

To help spot process bottlenecks, cycle time is divided into stages:

Development stage - time from the first commit to an open PR;

Review stage - time from an open PR to an approval;

Merge stage - time from an approval to merge event.
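The stage split above can be sketched as follows. This is a minimal illustration rather than Codigy's implementation: the `BranchEvents` record and its field names are hypothetical, and it assumes each branch has exactly one timestamp for the first commit, the PR opening, the approval, and the merge.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class BranchEvents:
    """Hypothetical record of the key events on one feature branch."""
    first_commit: datetime
    pr_opened: datetime
    approved: datetime
    merged: datetime


def stage_durations(events: BranchEvents) -> dict[str, timedelta]:
    """Split a branch's cycle time into the three stages described above."""
    return {
        "development": events.pr_opened - events.first_commit,
        "review": events.approved - events.pr_opened,
        "merge": events.merged - events.approved,
    }


def cycle_time(events: BranchEvents) -> timedelta:
    """Total duration from first commit to merge (the sum of the stages)."""
    return sum(stage_durations(events).values(), timedelta())


# Made-up timestamps, for illustration only.
example = BranchEvents(
    first_commit=datetime(2022, 2, 1, 9, 0),
    pr_opened=datetime(2022, 2, 2, 9, 0),
    approved=datetime(2022, 2, 2, 15, 0),
    merged=datetime(2022, 2, 2, 16, 0),
)
```

Keeping the stages as separate durations makes it easy to see which one dominates the total for a given branch.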

To keep Cycle time realistic and useful, some branches are excluded from the calculation:

Long-lived branches, e.g. develop or main;

Feature branches that are identified as stale;

Other feature branches or prefixes that you have manually added to be excluded.
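As a rough sketch of how these exclusions could feed into the average, assuming hypothetical branch names, prefixes, and a precomputed stale flag (none of which come from Codigy itself):

```python
from datetime import timedelta
from typing import Optional

# Hypothetical configuration; real values come from your branching strategy.
LONG_LIVED = {"develop", "main"}
IGNORED_PREFIXES = ("release/", "hotfix/")


def is_excluded(name: str, is_stale: bool) -> bool:
    """Return True if a branch should be left out of the cycle time average."""
    return name in LONG_LIVED or is_stale or name.startswith(IGNORED_PREFIXES)


def average_cycle_time(
    branches: list[tuple[str, bool, timedelta]],
) -> Optional[timedelta]:
    """Average the durations of branches that survive the exclusion rules.

    Each item is (branch name, stale flag, duration). Returns None when
    every branch is excluded.
    """
    kept = [d for name, stale, d in branches if not is_excluded(name, stale)]
    if not kept:
        return None
    return sum(kept, timedelta()) / len(kept)
```

This simplified version drops every stale branch outright and does not model how a branch that turned stale within the selected period is handled.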

Throughput measures how many features were completed (merged into a target branch, e.g. develop or master) during the period.
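Counting merges within a period might look like the sketch below. The function name is hypothetical, and treating both period boundaries as inclusive is an assumption, not documented Codigy behavior.

```python
from datetime import date


def throughput(merge_dates: list[date], start: date, end: date) -> int:
    """Count merges whose date falls within [start, end], both inclusive."""
    return sum(1 for merged in merge_dates if start <= merged <= end)
```

For example, calling it with the merge dates of all feature branches and the first and last day of a month gives that month's throughput.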

Throughput can help us understand a few things:

Seasonal volatility - is there a predictable difference between quarters?

Organization efficiency trends - as our organization changes, how does our per-unit efficiency change with new processes and scale?

Value of a repository or module - the more time teams spend on an area, the more valuable it is to investigate and improve.

Ease of use - the ratio between applied effort and outcome is a valuable indicator. If we put the most effort into a module but its throughput is low, it is an obvious target for refactoring.

Culture filters track known anti-patterns that affect the delivery flow or workspace tidiness.

To conveniently spot trends across an entire organization or a tribe, Codigy wraps this information into a Cultural grade.

The Cultural grade ranges from 1 (Not great) to 5 (Amazing💪) and is based on the share of feature branches that triggered cultural anti-pattern filters (benchmarks are adjustable):

Stale & Idle features

Feature branches that were inactive longer than defined in your benchmarks. Both filters work in a similar way:

Idle features are marked after a set number of hours of inactivity as a reminder to the team.

Stale features are marked and excluded from cycle time after a set number of days without activity.

Accumulation of such features reduces the throughput and limits potential value shipped to the end-user.
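The two inactivity filters can be illustrated with a small sketch. The thresholds below are hypothetical placeholders, not Codigy's defaults; the actual values are set in your benchmarks.

```python
from datetime import datetime, timedelta

# Hypothetical benchmark values, for illustration only.
IDLE_AFTER = timedelta(hours=48)
STALE_AFTER = timedelta(days=14)


def inactivity_status(last_activity: datetime, now: datetime) -> str:
    """Classify a feature branch as 'active', 'idle', or 'stale'."""
    inactive_for = now - last_activity
    if inactive_for >= STALE_AFTER:
        return "stale"
    if inactive_for >= IDLE_AFTER:
        return "idle"
    return "active"
```

Checking the stale threshold first means a branch that qualifies for both labels is reported only as stale.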

Oversized PRs

Pull requests that have exceeded your benchmarks.

Based on the "Fits in your head" concept, oversized PRs are harder to review and can lead to a higher chance of defects.

Overcrowded branches

Branches where the number of contributors has exceeded the benchmark.

Based on the assumption that small tasks with clear owners lead to a safer and faster process. Not immediately dangerous, but worth investigation.

Chaotic names

Branches that do not match the defined branching strategy.

Consistent naming makes the workspace easier to navigate for everyone on the team.

Branches waiting to close

Branches that were Merged into the target branch but are not yet Closed or were Closed after exceeding the benchmark time.

Done with the branch? Close the branch. A clean workspace is easier to navigate for everyone on the team.
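One way to picture how a share of flagged branches could map onto the 1 to 5 grade is sketched below. The thresholds are invented for illustration and are not Codigy's benchmarks; only the idea of grading by share comes from the description above.

```python
# Hypothetical thresholds: the maximum share of flagged branches allowed
# for each grade, checked from best to worst. Not Codigy's defaults.
GRADE_THRESHOLDS = [(0.05, 5), (0.15, 4), (0.30, 3), (0.50, 2)]


def cultural_grade(flagged: int, total: int) -> int:
    """Map the share of flagged feature branches to a grade from 1 to 5."""
    if total <= 0:
        raise ValueError("total must be positive")
    share = flagged / total
    for max_share, grade in GRADE_THRESHOLDS:
        if share <= max_share:
            return grade
    return 1
```

Here `flagged` would be the number of feature branches that triggered at least one cultural filter and `total` the number of feature branches analyzed.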

Issues are the driver of innovation🥰 Codigy analyzes all feature branches. An issue is created if a feature branch shows signs of cultural anti-patterns or if it gets stuck in the delivery flow.

Issues are handy for:

Pinpointing a specific process or codebase area where a bottleneck occurs;

Fuelling an informed discussion during sprint retrospectives;

Providing data for designing action points during retrospectives;

Validating that you have resolved a bottleneck or an anti-pattern.

Codigy supports all organizational levels. Each gives an overview and trends for various ranges, and lets you drill down to the issue level for investigation:

Entire organization (All teams or All tribes);

Specific tribe (Group of teams);

Specific team;

Issue list (For cycle stages and cultural anti-patterns).

Organizational levels give actionable data to owners:

CTO

VP of Engineering

Director of Engineering

Engineering manager

Team leads and their teams

Each can track & analyze their exact area of responsibility.

Process Health insights are included in the periodic reports so your teams can track their progress and access data to make informed decisions.

You can read about daily stand-up and sprint retrospective reports here.

To achieve high performance in large accounts, we employ the following logic:

Calculated & updated at the end of each day:

Cycle time

Stage time

Cultural grade

Calculated & updated in real-time:

Throughput

Issue count (both for cycle stages & cultural anti-patterns)

Issue details (both for cycle stages & cultural anti-patterns)

All Process health filters have benchmarks. They define what is considered to be an issue based on your internal culture and expectations.

Codigy comes pre-configured with default values. You can configure expected values for your cycle stages & cultural anti-patterns as global settings for the entire organization, or use granular custom settings for an individual tribe or team.
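Layered settings like these are often resolved by merging from the broadest scope to the narrowest. The sketch below assumes that team settings override tribe settings, which override the global defaults; that precedence, along with every benchmark name and value shown, is a hypothetical illustration rather than documented Codigy behavior.

```python
from typing import Optional

# Hypothetical benchmark names and values, for illustration only.
GLOBAL_DEFAULTS = {"review_hours": 24, "max_pr_lines": 400}
TRIBE_OVERRIDES = {"payments": {"review_hours": 12}}
TEAM_OVERRIDES = {"checkout": {"max_pr_lines": 250}}


def resolve_benchmarks(tribe: Optional[str], team: Optional[str]) -> dict[str, int]:
    """Merge settings so that team values override tribe values,
    which in turn override the organization-wide defaults."""
    resolved = dict(GLOBAL_DEFAULTS)
    resolved.update(TRIBE_OVERRIDES.get(tribe, {}))
    resolved.update(TEAM_OVERRIDES.get(team, {}))
    return resolved
```

A team without custom settings simply inherits whatever its tribe or the organization defines.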

Codigy needs a branching strategy to accurately calculate your cycle time & throughput.

Based on your configuration, Cycle time calculation will:

Exclude all long-lived branches such as main or develop.

Exclude prefixes listed in "Branch prefixes to ignore".

Exclude stale branches. If a branch was identified as stale during the selected period, it is still included and displays the hours that impacted the period.

The Merge stage measures the time before a feature branch merges into the Development branch. If you use trunk-based development without a Development branch, you can specify your main branch as the target.

All branches with prefixes or names that don't match the strategy will be treated as feature branches (but marked as chaotically named).

The cultural filters use the same principles but include stale features.
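The naming rules above can be sketched as a small classifier. The branch names and prefixes are hypothetical examples of a branching strategy, not Codigy defaults, and stale handling is left out for brevity.

```python
# Hypothetical branching strategy; actual names and prefixes are configurable.
LONG_LIVED = {"main", "develop"}
IGNORED_PREFIXES = ("release/", "dependabot/")
FEATURE_PREFIXES = ("feature/", "bugfix/")


def classify_branch(name: str) -> tuple[str, bool]:
    """Return (kind, chaotically_named) for a branch name.

    kind is 'long-lived', 'ignored', or 'feature'. Names that match no
    configured prefix still count as features but are flagged as chaotic.
    """
    if name in LONG_LIVED:
        return ("long-lived", False)
    if name.startswith(IGNORED_PREFIXES):
        return ("ignored", False)
    if name.startswith(FEATURE_PREFIXES):
        return ("feature", False)
    return ("feature", True)
```

Under this sketch, only branches of kind `feature` would count toward cycle time and throughput, including the chaotically named ones.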

As with benchmarks, you can configure a global policy for all repositories or create custom ones for each repository.

Have a question?

If you need a more detailed explanation about any of the Codigy metrics or mechanics - fire away in our community chat on Discord 👌

This page was last revised on February 16, 2022
